Having explored baseline inpainting, dimension fixes, and ControlNet’s structural guidance, we still lacked the domain-specific knowledge to perfectly restore black-and-white Japanese Spitz fur.

That’s where Low-Rank Adaptation, or LoRA, enters the picture. We can train a relatively small set of parameters to inject new knowledge into an existing Stable Diffusion model, rather than performing an expensive, full-scale fine-tuning.

Adapting this concept to SDXL in a targeted, industry-specific way presents significant technical challenges:

- Two text encoders: The SDXL base model itself conditions on two text encoders, and the optional refiner stage brings an encoder of its own. Our standard DreamBooth-like scripts generally assume only one.

- Recursion issues: Integrating LoRA into every relevant part of the UNet architecture can create unexpected loops and hidden complexities.

- Massive VRAM requirements: Even though LoRA is lighter than a full fine-tune, SDXL’s larger architecture demands more memory than typical SD 1.5 training.

This article covers how we tackled the first two of these challenges: dual text-encoder management and recursion issues. Memory is the subject of the second part. Our solution, though still evolving, opens up new possibilities for advanced fur detail restoration in Spitz dog images.

Why LoRA for Spitz fur?

Before diving into the code specifics, let’s clarify why LoRA is so critical for a niche domain like black-and-white fur:

- Lightweight parameter set: Instead of updating all UNet weights, LoRA trains a pair of small low-rank matrices (rank 4, 8, etc.) alongside the frozen weights of attention layers or other linear transformations.

- Faster training: With fewer trainable parameters, you need less memory and time to converge.

- Retains base knowledge: The underlying model’s general photography sense remains, so we only tweak it for our specialized domain: in this case, the detailed hair geometry of black-and-white Spitzes.
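To put a rough number on "lightweight", here is a back-of-the-envelope sketch. The 1280-wide layer is an illustrative assumption, not a measurement of any particular SDXL layer, and the helper names are ours.

```python
def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two LoRA factors: down (rank x d_in) plus up (d_out x rank)."""
    return rank * d_in + d_out * rank


def full_param_count(d_in: int, d_out: int) -> int:
    """Parameters in the full weight matrix of one linear layer (bias ignored)."""
    return d_in * d_out


if __name__ == "__main__":
    d_in = d_out = 1280  # hypothetical layer width, chosen only for illustration
    full = full_param_count(d_in, d_out)
    for rank in (4, 8):
        lora = lora_param_count(d_in, d_out, rank)
        print(f"rank={rank}: {lora} LoRA params vs {full} full ({lora / full:.2%})")
```

For a square layer the ratio works out to 2 * rank / width, so the saving shrinks as the rank grows.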

However, the gains can be overshadowed if the model architecture is exceptionally large, or if you have mismatched assumptions about tensor shapes, text encoders, and more. That’s where the real fun begins.

DreamBooth-Style LoRA: The two-encoder conundrum

Traditional DreamBooth approach

DreamBooth is an AI-powered technology that personalizes text-to-image models, allowing them to generate unique images of specific subjects in various contexts. It works by fine-tuning existing models like Stable Diffusion with just a few images of the desired subject, enabling the creation of highly customized and realistic images.

Our first attempt was a script reminiscent of DreamBooth, which typically:

- Loads a single Stable Diffusion pipeline (e.g., runwayml/stable-diffusion-v1-5).

- Replaces attention processors with LoRAAttnProcessor.

- Performs a DreamBooth-like training loop:

  - Encode real images → get latents.

  - Add noise, run the forward pass, compute the MSE between the predicted noise and the noise that was added.

  - Update only the LoRA parameters.
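The loop above can be sketched in plain PyTorch. This is a toy illustration, not our training script: `frozen_base` and `lora_delta` are hypothetical stand-ins for the frozen UNet and the trainable LoRA weights, the latents are random tensors rather than VAE outputs, and the noising step uses the standard DDPM forward formula with a hand-picked `alpha_bar` instead of a real scheduler.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Hypothetical stand-ins; a real script would use the pipeline's VAE, UNet, and scheduler.
frozen_base = nn.Conv2d(4, 4, kernel_size=3, padding=1)  # plays the frozen UNet
lora_delta = nn.Conv2d(4, 4, kernel_size=1, bias=False)  # plays the trainable LoRA weights
nn.init.zeros_(lora_delta.weight)  # start as a no-op, as LoRA usually does
for p in frozen_base.parameters():
    p.requires_grad_(False)

# Only the LoRA stand-in is handed to the optimizer.
optimizer = torch.optim.AdamW(lora_delta.parameters(), lr=1e-4)


def training_step(latents: torch.Tensor, alpha_bar: float) -> float:
    """One noise-prediction step: add noise, predict it, regress with MSE."""
    noise = torch.randn_like(latents)
    # DDPM forward process: blend clean latents with Gaussian noise.
    noisy = alpha_bar ** 0.5 * latents + (1 - alpha_bar) ** 0.5 * noise
    pred = frozen_base(noisy) + lora_delta(noisy)
    loss = F.mse_loss(pred, noise)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    return loss.item()


latents = torch.randn(2, 4, 8, 8)  # stand-in for VAE-encoded images
base_before = frozen_base.weight.clone()
loss_value = training_step(latents, alpha_bar=0.5)
```

After one step, `frozen_base` is unchanged and only `lora_delta` has moved, which is the property the real loop relies on.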

For SD 1.5 or other single-encoder models, this script usually “just works.” But SDXL has a multi-stage pipeline:

- A base encoder + base UNet.

- A refiner with its own text encoder, refining the image in a second pass.

Standard DreamBooth scripts ignore that second text encoder. If you point such a script at SDXL, you get shape mismatches, missing attributes, or partial training that does nothing for the second stage.

Or you might inadvertently skip the refiner encoder, leading to suboptimal or glitchy results in the final pass.

Partial solutions

Focus on the base model only: Some devs sidestep the refiner entirely. This can show workable results, but you lose the high-res improvement stage that makes SDXL special.

Double encoders: You might replicate the LoRA injection for both text encoders, but that implies doubling your parameter footprint. It’s still smaller than a full tune, but more than a single-encoder approach. You also have to orchestrate two sets of latents, noise, and scheduler steps carefully.

The DreamBooth script we shared is an admirable starting point for single-encoder Stable Diffusion. But SDXL’s second text encoder demands custom code changes and a more advanced pipeline structure.

BFS hooking: Taming recursion & overriding linear layers

BFS, or Breadth-First Search, is a method used in computer science to explore data that’s organized like a tree or a network.

Rationale for BFS hooking

After hitting the two-encoder wall, we tried a more raw approach: dynamically attach LoRA layers to every Linear module in the UNet or pipeline. We call it “BFS hooking” because we:

- Go through the UNet’s module tree level by level

- Identify each nn.Linear child

- Attach extra “lora_down” and “lora_up” sub-layers + a forward hook

Why BFS?

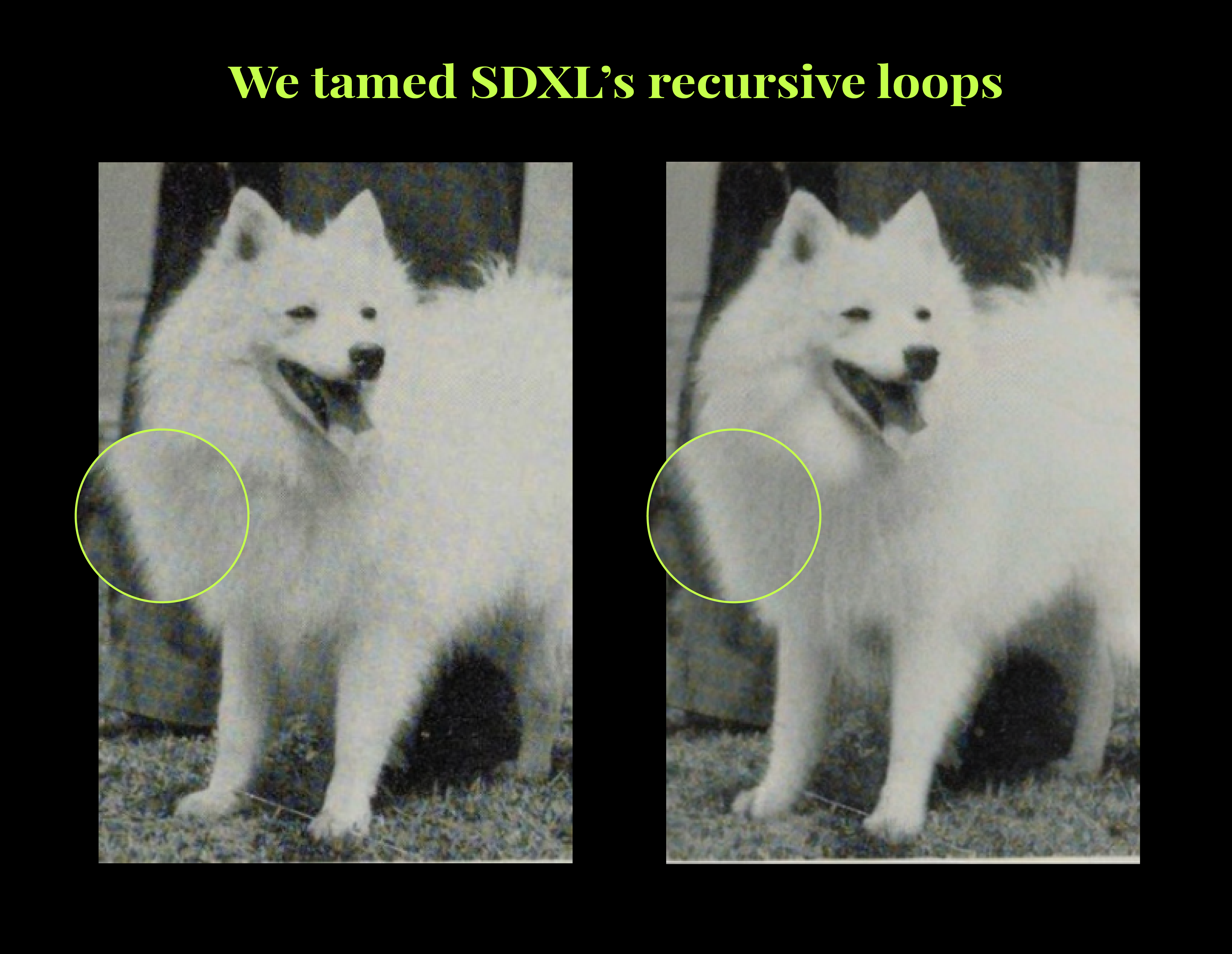

Avoid recursive loops: Some modules might reference each other or appear multiple times. With BFS, we maintain a queue and a visited set, ensuring we only process each submodule once.

Complete coverage: We don’t rely on the model naming scheme for attention layers. We capture any linear transform that might matter for cross-attention, self-attention, or feed-forward layers.

Handling recursion issues

If we used a naive DFS with no record of visited modules, or simply iterated over .children() repeatedly, we could:

- Re-visit submodules multiple times.

- Potentially fall into cycles if the model references itself or if you’re tracing from multiple vantage points.

BFS plus a “visited set” ensures a predictable path:
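A minimal sketch of that traversal, assuming a plain PyTorch module tree. The function name `find_linear_layers_bfs` and the toy model are ours, for illustration only; the shared `proj` layer mimics a module that is reachable from two parents.

```python
from collections import deque

import torch.nn as nn


def find_linear_layers_bfs(root: nn.Module) -> list[tuple[str, nn.Linear]]:
    """Walk the module tree level by level and return each nn.Linear exactly once."""
    found = []
    visited = {id(root)}          # identity-based, so shared modules are caught
    queue = deque([("", root)])   # (dotted name, module)
    while queue:
        prefix, module = queue.popleft()
        if isinstance(module, nn.Linear):
            found.append((prefix, module))
        for name, child in module.named_children():
            if id(child) in visited:
                continue          # already queued via another parent
            visited.add(id(child))
            queue.append((f"{prefix}.{name}" if prefix else name, child))
    return found


class Block(nn.Module):
    def __init__(self, proj: nn.Linear):
        super().__init__()
        self.proj = proj
        self.act = nn.ReLU()


shared = nn.Linear(4, 4)  # the same layer object is used by both blocks
model = nn.Sequential(Block(shared), Block(shared), nn.Linear(4, 2))
layers = find_linear_layers_bfs(model)
names = [name for name, _ in layers]
```

Collecting the layers first and attaching LoRA afterwards also keeps the traversal from descending into the freshly added LoRA sub-layers.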

The forward hook mechanism

Whenever a Linear layer processes an input x, the default output is linear_out = W * x + b. With LoRA, we want an extra term, lora_out = lora_up(lora_down(x)), optionally multiplied by a scaling factor.

So the final output becomes linear_out + lora_out.

Because we don’t want to rewrite PyTorch’s entire forward pass, we attach a forward hook:
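A minimal sketch of such a hook, using PyTorch's `register_forward_hook`. The helper name `attach_lora`, the default rank, the `scale` factor, and the initialisation are our assumptions for illustration, not a fixed recipe.

```python
import torch
import torch.nn as nn


def attach_lora(linear: nn.Linear, rank: int = 4, scale: float = 1.0):
    """Freeze `linear`, add trainable lora_down/lora_up, and patch its output via a hook."""
    # Freeze the original weights first, so the LoRA factors added below stay trainable.
    for p in linear.parameters():
        p.requires_grad_(False)

    linear.lora_down = nn.Linear(linear.in_features, rank, bias=False)
    linear.lora_up = nn.Linear(rank, linear.out_features, bias=False)
    nn.init.normal_(linear.lora_down.weight, std=1.0 / rank)
    nn.init.zeros_(linear.lora_up.weight)  # zero init: the layer is unchanged at step 0

    def hook(module, inputs, output):
        x = inputs[0]  # positional args arrive as a tuple; the first is the input tensor
        # Returning a value from a forward hook replaces the layer's output.
        return output + module.lora_up(module.lora_down(x)) * scale

    return linear.register_forward_hook(hook)


layer = nn.Linear(8, 6)
x = torch.randn(3, 8)
base_out = layer(x)                    # output before LoRA is attached
handle = attach_lora(layer, rank=4)
patched_out = layer(x)                 # identical to base_out until lora_up is trained
```

Calling `handle.remove()` detaches the hook and restores the original forward behaviour.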

Crucial: PyTorch hands a forward hook the layer’s positional arguments as a tuple, so we must confirm that “input[0]” is the raw input tensor to the layer (not the entire tuple of arguments). If your architecture passes multiple arguments, it can get trickier.

BFS hooking vs. LoRAAttnProcessor

This hooking approach is more brute force. Instead of only hooking attention blocks with a specialized LoRAAttnProcessor, we might:

- Inject LoRA into feed-forward layers.

- Potentially overfit or add parameters to modules that aren’t crucial for fur texture.

Upside: 100% coverage, a strong chance that relevant linear layers for Spitz fur are indeed “LoRA-enabled.”

Downside: More parameters, bigger VRAM usage, possibly slower training, or minimal improvements from hooking irrelevant layers.

Conclusion

In this first part, we’ve resolved some of the most pressing challenges in adapting LoRA for specialized tasks like restoring black-and-white Japanese Spitz fur. We’ve explored the intricacies of SDXL’s dual text encoder system and developed innovative solutions using BFS hooking to overcome recursive complexities.

As we move into the second part of our exploration, we face perhaps the most significant challenge yet: managing the enormous memory requirements of SDXL. With its larger architecture and higher resolution capabilities, SDXL pushes the limits of even high-end GPUs.

Stay tuned!
